Research Impact and Metrics: Author metrics

This Guide will provide you with information about bibliometric indicators of research impact and tools that you can use to access these indicators.

https://pitt.libguides.com/bibliometricIndicators/ArticleLevelMetrics

Author-level metrics

Author-level metrics are citation metrics that measure the bibliometric impact of individual authors. The h-index is the best-known author-level metric. Since it was proposed by JE Hirsch in 2005, it has gained a lot of popularity among researchers, while bibliometrics scholars have proposed a few variants to account for its weaknesses (the g-index and m-index are good examples).

Clarivate Analytics provides a list of Highly Cited Authors. These are individuals who, over the last 10-year period, have the highest cumulative number of highly cited papers (papers placing in the top 1% of the citation distribution) across 21 broad subject categories. The 2018 edition of the Highly Cited list includes 6,078 authors. Essential Science Indicators is a useful tool for identifying authors who, in the last 10 years, received enough citations in their respective disciplines to place them in the top 1% of all authors in Web of Science.

Other metrics originally developed for academic journals can be reported at the researcher level: the author-level Eigenfactor and the Author Impact Factor (AIF) are such examples.

h-index and its variants

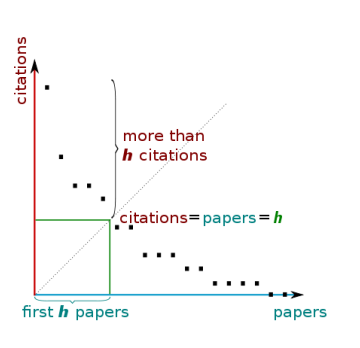

The h-index attempts to measure both the productivity and the impact of the published work of a scientist or scholar. The calculation was suggested by the American physicist JE Hirsch and can be summed up as:

A scientist has an index h if h of his/her Np papers have at least h citations each, and the other (Np − h) papers have no more than h citations each.

In other words, to have an h-index of 5, an author has to have 5 publications, each receiving at least 5 citations.
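The definition above can be sketched in a few lines of Python. This is a minimal illustration rather than the method used by any particular database; the function name `h_index` and the citation counts are made up for the example.

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    # Rank papers from most cited to least cited.
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank  # the paper at this rank still has enough citations
        else:
            break     # every later paper has even fewer citations
    return h


# Hypothetical citation counts for one author's seven papers.
papers = [25, 8, 5, 5, 5, 2, 0]
print(h_index(papers))
```

Here five papers have at least five citations each, but there are not six papers with at least six citations, so the author's h-index is 5.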

Criticisms of the h-index include the fact that it does not account for highly cited papers: your h-index is the same whether your most highly cited paper has 100 or 10 citations. Another criticism is that it does not account for the career span of the author. Because it is a simple function of productivity and impact, authors with longer careers (and more publications) tend to have higher scores. To address these weaknesses, several h-index variants have been proposed.

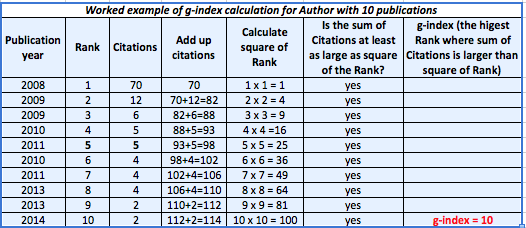

g-index is a variant of the h-index that, in its calculation, gives credit for the most highly cited papers in a data set. In the words of Leo Egghe, its inventor: “Highly cited papers are, of course, important for the determination of the value h of the h-index. But once a paper is selected to belong to the top h papers, this paper is not “used” any more in the determination of h, as a variable over time. Indeed, once a paper is selected to the top group, the h-index calculated in subsequent years is not at all influenced by this paper’s received citations further on: even if the paper doubles or triples its number of citations (or even more) the subsequent h-indexes are not influenced by this.” The g-index is always the same as or higher than the h-index.
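As a rough sketch, the g-index can be computed using the commonly cited form of Egghe's definition: the largest g such that the g most cited papers together have at least g² citations. That definition, the cap of g at the number of papers, the function name `g_index` and the sample data are assumptions of this example and are not taken from the text above.

```python
from itertools import accumulate


def g_index(citations):
    """Largest g such that the top g papers together have at least g*g citations.

    Assumption: g is capped at the number of papers in the data set.
    """
    ranked = sorted(citations, reverse=True)
    g = 0
    # accumulate() yields the running citation total of the top 1, 2, 3... papers.
    for rank, running_total in enumerate(accumulate(ranked), start=1):
        if running_total >= rank * rank:
            g = rank
    return g


# Two hypothetical authors with the same h-index (5) but different top papers.
author_a = [10, 8, 5, 5, 5, 2, 0]
author_b = [100, 8, 5, 5, 5, 2, 0]
print(g_index(author_a), g_index(author_b))
```

With these made-up counts the first author has a g-index of 5 and the second a g-index of 7, even though both have the same h-index, because the second author's single highly cited paper keeps contributing to the running total.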

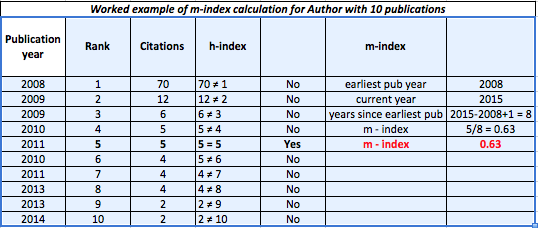

m-index is another variant of the h-index that displays h-index per year since first publication. The h-index tends to increase with career length, and m-index can be used in situations where this is a shortcoming, such as comparing researchers within a field but with very different career lengths. The m-index inherently assumes unbroken research activity since the first publication.
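The calculation is a simple division, sketched below. The function name `m_index`, the choice to count career length as the difference between the two years, and the sample researchers are assumptions made for illustration; some tools count the first year as year one.

```python
def m_index(h, first_publication_year, current_year):
    """h-index divided by the number of years since the first publication."""
    career_years = current_year - first_publication_year
    if career_years <= 0:
        raise ValueError("current_year must be later than first_publication_year")
    return h / career_years


# Two hypothetical researchers in the same field, compared in 2020.
senior = m_index(h=20, first_publication_year=2000, current_year=2020)
junior = m_index(h=10, first_publication_year=2015, current_year=2020)
print(senior, junior)
```

In this made-up comparison the senior researcher has the higher h-index (20 versus 10) but the lower m-index (1.0 versus 2.0), which is the kind of career-length effect the m-index is meant to surface.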

Other author-level metrics

Author Impact Factor (AIF) is the extension of the Impact Factor to authors. The AIF of an author A in year t is the average number of citations given by papers published in year t to papers published by A in a period of Δt years before year t. AIF can capture trends and variations over time in the impact of a scholar's scientific output, unlike the h-index, which is a growing measure that takes the whole career path into account. For more information see: Pan, RK; Fortunato, S (2014). “Author Impact Factor: Tracking the dynamics of individual scientific impact”. Scientific Reports 4: 4880.
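The AIF definition above can be sketched as follows. The data layout (a list of dictionaries with a `pub_year` and a per-year citation tally), the function name and the default window of 5 years are assumptions made for this example, not details taken from the cited paper's code.

```python
def author_impact_factor(papers, year, window=5):
    """Average citations received in `year` by papers published in the
    `window` years before `year` (the Δt of the definition)."""
    # Keep only papers published in the window [year - window, year - 1].
    recent = [p for p in papers if year - window <= p["pub_year"] < year]
    if not recent:
        return 0.0
    cites = sum(p["citations_by_year"].get(year, 0) for p in recent)
    return cites / len(recent)


# Hypothetical publication record for one author.
papers = [
    {"pub_year": 2010, "citations_by_year": {2019: 50}},        # outside the window
    {"pub_year": 2015, "citations_by_year": {2018: 4, 2019: 6}},
    {"pub_year": 2017, "citations_by_year": {2019: 3}},
]
print(author_impact_factor(papers, year=2019))
```

Only the 2015 and 2017 papers fall inside the five-year window before 2019, so the result is (6 + 3) / 2 = 4.5. The older, heavily cited 2010 paper does not count, which is why the AIF can fall as well as rise over a career.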

Author-level Eigenfactor is an attempt to use the Eigenfactor methodology (originally developed to rank journals) to create a ranked list of authors in SSRN. For a description of the study and the list of ranked authors see: West, JD; Jensen, MC; Dandrea, RJ; Gordon, GJ; Bergstrom, CT. (2013). “Author-level Eigenfactor metrics: Evaluating the influence of authors, institutions, and countries within the social science research network community”. Journal of the American Society for Information Science and Technology 64 (4): 787–801.

Where to find your h-index?

There are a number of sources where you can find your h-index. Please note that the value of the index may vary depending on the source of information (number of indexed publications, time span, etc.). For instance, h-index values derived from Google Scholar tend to be higher than those based on Web of Science or SCOPUS data.

In Web of Science you can see a calculated h-index for a group of publications (e.g. all publications by an author) through its author search. You can search by the author’s name or enter the author’s ORCID or ResearcherID identifier (if known).

SCOPUS has an “author search” form that lets you search by author name, ORCID and SCOPUS identifiers, and institutional affiliation. The results page is an author summary page with a range of author information, including the h-index.

Google Scholar is another source of h-index information. It requires you to create a Google Scholar profile before it provides metrics. Refer to our LibGuide on research impact to learn how to create a Google Scholar profile.

Publish or Perish is a freely accessible software program that retrieves and analyzes academic citations. It uses Google Scholar to obtain the raw citations, then analyzes these and presents the following associated metrics:

- Total number of papers and total number of citations

- Average citations per paper, citations per author, papers per author, and citations per year

- Hirsch’s h-index and related parameters

- Egghe’s g-index (g-index)

- The contemporary h-index

- The average annual increase in the individual h-index

- The age-weighted citation rate

- An analysis of the number of authors per paper.

Measuring Your Impact: Impact Factor, Citation Analysis, and other Metrics: Citation Analysis

Article-level metrics

Citation-based and altmetric measures can show the impact of an individual research publication. They can help answer the following questions:

How many times was an article cited?

How does it compare to other similar papers? (above/below expected count for its discipline, age or publishing outlet)

Is it in the top 0.1%, 1% or 5%, etc. in its discipline? (measure of excellence)

Is it gaining citations at an unusually rapid rate? (measure of early impact)

How is it tracking in social media? (possible indication of later citation performance)

Citation-based article-level indicators

Citation Counts:

In Web of Science, you can see article-level metrics in the “Citation Network” panel (see the screenshot below). In this example, the article has been cited 5814 times (citation count). If a publication places in the top 1% of similar publications (defined by subject area and age), it is given Highly Cited status (an indicator of excellence). Exceptionally highly cited papers published within the last two years are awarded Hot Paper status (a good indicator of early impact).

In SCOPUS, the Metrics window shows the number of citations received to date, together with the percentile position relative to similar publications (by subject area and publication date). Field-weighted citation impact shows how the citation count compares to the average (baseline) for similar publications. In the example below, the publication has 5986 citations, which places it in the 99th percentile of the distribution of all similar publications, and its field-weighted citation impact is 61.59 times the average for similar publications.

Benchmarks:

If your publication does not have a Highly Cited or Hot Paper badge, you can still benchmark its performance against similar documents. Pitt’s subscription to Web of Science provides access to Essential Science Indicators (ESI), allowing you to understand baselines and citation distributions across 22 subject categories for papers published in the last ten years. The screenshots below show ESI baseline and percentile tables (updated for May 2019). These tables are updated six times a year. Access up-to-date tables here. Also, you can view a short tutorial on how to read and interpret the baseline tables here.

While SCOPUS does not publish baseline or percentile tables, each publication indexed in SCOPUS shows a citation count, citation benchmarking and field-weighted citation impact.

Characteristics of Citing Articles: Often interesting information about the impact of a publication can be gleaned by analyzing characteristics of citing papers.

In Web of Science, from the bibliographic detail page, you can follow the “Times Cited” link to generate a list of citing documents. Then run the “Analyze Results” function for a wealth of information about the publications citing your paper. You can explore geographic impact (countries, institutions and individuals citing your research), disciplinary impact (disciplinary mapping of papers citing your research), or the range and impact of citing journals. View a short tutorial on how to use the “Analyze Results” function in Web of Science.

In SCOPUS, from the article’s bibliographic detail page, follow the link to “view all citing documents”. From there, select “Analyze Search Results”. It provides a visual analysis of your results broken down into 7 categories (year, source, author, affiliation, country/territory, document type and subject area). Please visit the Scopus blog page on Analysis Tools for more information.

Non-citation based article-level indicators

Non-citation-based indicators of impact (altmetrics) can be grouped in a number of ways. We can analyse the usage of scholarly content, measured by views and downloads (from a publisher website or a scholarly networking site) and captures (into a reference management tool). Other attention can be measured by counting blog mentions, tweets or press coverage. PlumX Metrics are the primary source of article-level metrics in Scopus. For more information, please visit the blog post PlumX Metrics now on Scopus: Discover how others interact with your research, and also visit our page on Alternative Metrics.

Journal-level metrics

There are a number of bibliometric indicators that focus on measuring the impact of scholarly journals. Most of these measures are calculated from the pool of journals indexed in one of two citation indexing databases: Web of Science or SCOPUS.

All metrics based on Web of Science-indexed journals can be accessed via Journal Citation Reports. In addition, Eigenfactor measures can be accessed on the Eigenfactor.org website.

SCOPUS-based metrics can be accessed via the SCOPUS platform as well as via the CWTS Journal Indicators and Scimago (SJR indicator only) websites.

Many publishers also provide information about the impact of their journals on the journal web pages. See below an example of an Elsevier title showing a number of relevant indicators.

Web of Science-based journal metrics

Journal Impact Factor (JIF) is the best-known indicator of journal impact. It was proposed by Eugene Garfield and is defined as “the average number of times articles from the journal published in the past two years have been cited in the JCR year.” It is calculated by taking the number of citations made in the JCR year to items published in the two previous years and dividing it by the total number of articles published in those two years. For instance, the 2014 IF of New England Journal of Medicine is 55.873 and is calculated as follows:

Because JIF values are not normalised for disciplines, it is very difficult to compare IF values for journals in different disciplines. Moreover, the range of IF values varies widely from discipline to discipline, depending on citation behaviors. For instance, top medical journals have an IF of 50.00 or higher, while top titles in mathematics have an IF of around 3.00. For that reason, a good alternative to citing the raw IF value is to report the quartile position of the title. For instance, Journal of Women’s Health, with an IF of 2.050, places in Q1 in the Women’s Studies subject category (2nd highest IF value out of 41 titles in the category) but in Q2 in Public, Environmental and Occupational Health (37th out of 147 titles in the category).
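The quartile position can be derived from a title's rank within its category. The sketch below assumes the usual cut-off rule (rank divided by the number of titles: up to 0.25 is Q1, up to 0.5 is Q2, and so on); the function name `jif_quartile` is made up for this example, and the exact rule applied by Journal Citation Reports should be checked against its documentation.

```python
def jif_quartile(rank, n_titles):
    """Map a rank within a subject category (1 = highest IF) to Q1..Q4.

    Assumed rule: rank / n_titles <= 0.25 is Q1, <= 0.5 is Q2, and so on.
    """
    if not 1 <= rank <= n_titles:
        raise ValueError("rank must be between 1 and n_titles")
    # Integer ceiling of 4 * rank / n_titles, avoiding floating-point rounding.
    quartile = (4 * rank + n_titles - 1) // n_titles
    return f"Q{quartile}"


# Ranks quoted above for Journal of Women's Health.
print(jif_quartile(2, 41))    # Women's Studies
print(jif_quartile(37, 147))  # Public, Environmental and Occupational Health
```

Under this rule, rank 2 of 41 falls in Q1, while rank 37 of 147 sits just past the 25% cut-off (37/147 is about 0.252) and therefore falls in Q2, matching the placements described above.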

Since 2007, Journal Citation Reports has also provided 5-year Impact Factor values. The 2014 5-year IF of New England Journal of Medicine is 54.39 and is calculated as follows:
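As a rough sketch of the arithmetic behind both the 2-year and 5-year values, the function below divides citations received in the JCR year by the number of items published in the preceding window. All counts are made up for illustration and are not the actual New England Journal of Medicine figures; the function and parameter names are likewise hypothetical.

```python
def impact_factor(cites_by_pub_year, items_by_pub_year, jcr_year, window=2):
    """Citations received in `jcr_year` to items published in the previous
    `window` years, divided by the number of items published in those years."""
    years = range(jcr_year - window, jcr_year)
    citations = sum(cites_by_pub_year.get(y, 0) for y in years)
    items = sum(items_by_pub_year.get(y, 0) for y in years)
    if items == 0:
        raise ValueError("no items published in the window")
    return citations / items


# Hypothetical journal: citations received in 2014, keyed by the cited item's
# publication year, and the number of articles published in each year.
cites_in_2014 = {2009: 300, 2010: 350, 2011: 400, 2012: 450, 2013: 500}
articles = {2009: 100, 2010: 100, 2011: 100, 2012: 100, 2013: 100}

print(impact_factor(cites_in_2014, articles, jcr_year=2014))            # 2-year
print(impact_factor(cites_in_2014, articles, jcr_year=2014, window=5))  # 5-year
```

For this invented journal the 2-year value is (450 + 500) / 200 = 4.75 and the 5-year value is 2000 / 500 = 4.0. The only difference between the two indicators is the length of the publication window.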

SCOPUS-based journal metrics

Source Normalized Impact per Paper (SNIP) measures contextual citation impact by weighting citations based on the total number of citations in a subject field. As a field-normalized metric SNIP offers the ability to benchmark and compare journals from different subject areas. This is especially helpful to researchers publishing in multidisciplinary fields.

SCImago Journal Rank (SJR) is a prestige metric based on the idea that ‘all citations are not created equal’. With SJR, the subject field, quality and reputation of the journal have a direct effect on the value of a citation. It is a size-independent indicator and it ranks journals by their ‘average prestige per article’ and can be used for journal comparisons in the scientific evaluation process.

CiteScore is Elsevier’s answer to JIF. The CiteScore for a journal in a given year counts the citations received in that year to documents published in the three preceding years and divides this by the number of documents published in that journal in the same 3-year period. Unlike JIF, the document types used in the numerator and denominator are the same and include research articles, reviews, conference proceedings, editorials, errata, letters and short surveys. Articles-in-press are not included in the calculation of CiteScore.

See box below for more information about the sources of these journal metrics.

Sources of SCOPUS-based journal indicators

All three SCOPUS-based journal indicators can be found in Compare Sources https://www.scopus.com/source/eval.uri (subscription required).

Other journal level indicator sources

Google Scholar Metrics is another source of journal-level metrics. Based on Google Scholar citations, it ranks journals grouped by subject category or language. The metrics used are the h5-index and the h5-median. The h5-index is the h-index for articles published in the last 5 complete years: the largest number h such that h articles published in 2010-2014 have at least h citations each. The h5-median for a journal is the median number of citations for the articles that make up its h5-index. The screenshot below shows the Google Scholar ranking for English Language and Literature journals.
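Both journal metrics can be sketched from a list of citation counts for the articles a journal published in the five-year window. The function name `h5_metrics` and the sample counts are assumptions made for this illustration; Google Scholar's own implementation is not described here.

```python
from statistics import median


def h5_metrics(citations):
    """Return (h5-index, h5-median) for articles from the five-year window."""
    ranked = sorted(citations, reverse=True)
    # h5-index: number of ranks at which the article still has >= rank citations.
    h5 = sum(1 for rank, cites in enumerate(ranked, start=1) if cites >= rank)
    # h5-median: median citation count of the articles that make up the h5-index.
    core = ranked[:h5]
    h5_median = median(core) if core else 0
    return h5, h5_median


# Hypothetical citation counts for a journal's articles from the window.
journal_articles = [40, 30, 12, 9, 6, 5, 3, 1]
print(h5_metrics(journal_articles))
```

In this made-up case the h5-index is 5 (five articles with at least five citations each) and the h5-median is 12, the middle value of the five articles in that core set.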